Let's be honest: writing infrastructure code is powerful, but it's also easy to mess up. A single misconfiguration in a CloudFormation template can create a serious security hole.

In Part 1, I built a simple Python tool to understand policy-as-code fundamentals. Now it's time to level up: I'm integrating production-grade tools into a real CI/CD pipeline.

I wanted to build a safety net for my CI/CD pipeline that would not only catch these issues but also tell me exactly how to fix them. I combined the strict enforcement of Open Policy Agent (OPA) with the AI-powered remediation advice from Amazon Q Developer to create a hybrid security workflow.

Here's how the workflow looks as a decision flow:

                Developer opens PR
                        ↓
        GitHub Actions runs OPA/Conftest
                        ↓
                  Policy Pass?
                        │
              ┌─────────┴─────────┐
              │                   │
              ↓                   ↓
             YES                  NO
              │                   │
              ↓                   ↓
          PR Check            PR Check
          Passes ✅           Fails ❌
              │                   │
              ↓                   ↓
          Ready to         Amazon Q posts
            Merge        remediation advice
                                  │
                                  ↓
                       Developer makes changes
                        and pushes new commit
                                  │
                                  ↓
                              PR Check
                              Passes ✅
                                  │
                                  ↓
                           Ready to Merge

The Goal: A Guard That's Also a Guide

My mission was to create a workflow that does two things well: act as a strict enforcer and as a helpful guide. That combination became the core of my solution.

- Detect & Block: Automatically run security checks on every pull request and fail the build if a policy was violated. No exceptions.

- Advise & Remediate: Give developers instant, clear, and actionable advice on how to fix the exact problem that was found.

Show Me the Code!

Here’s a look at the key pieces I built.

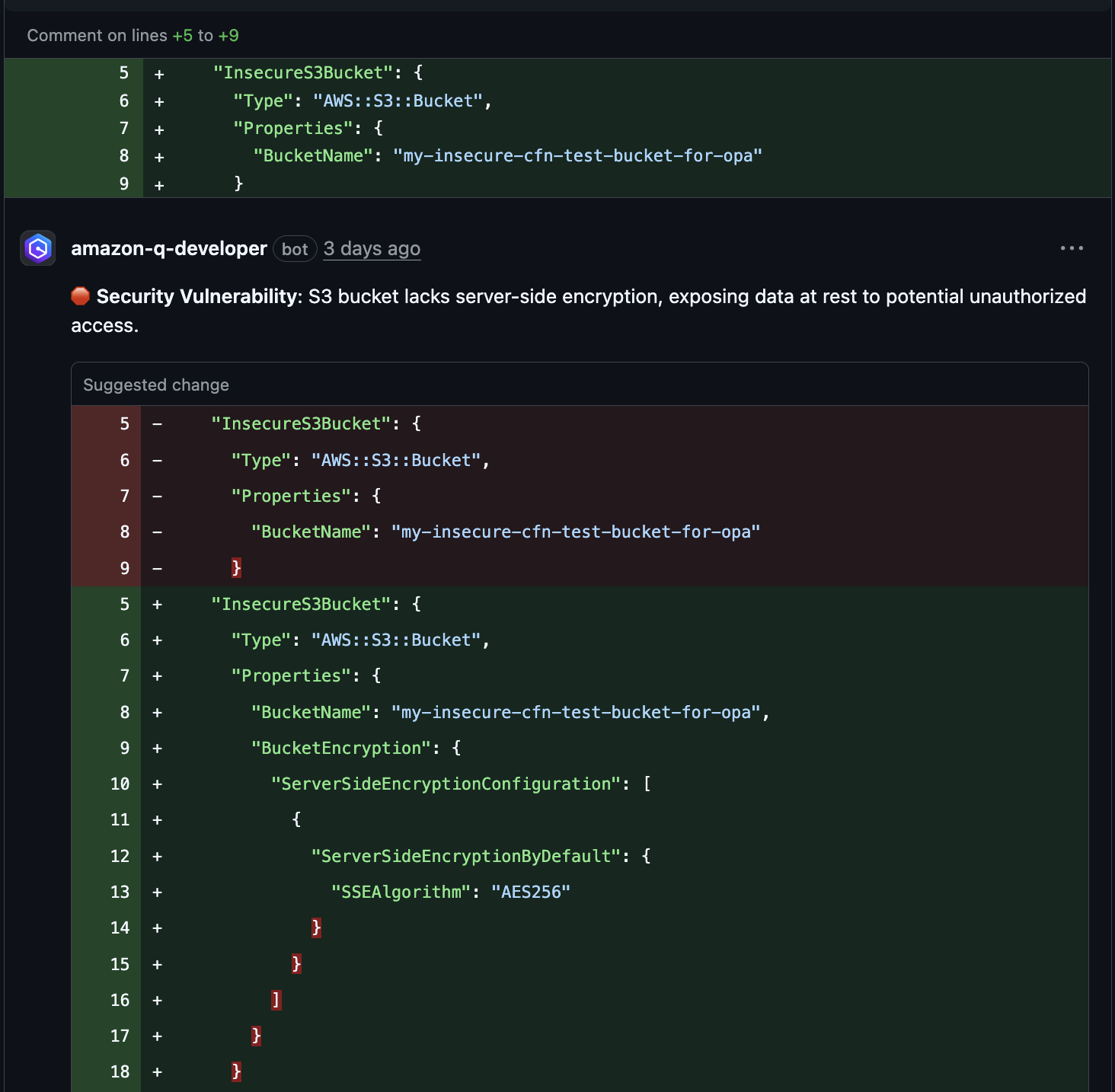

First, my insecure CloudFormation template (insecure-template.json). This template contains a critical security misconfiguration: an S3 bucket with no encryption enabled.

    {
      "AWSTemplateFormatVersion": "2010-09-09",
      "Description": "A CloudFormation template with an insecure S3 bucket (no encryption).",
      "Resources": {
        "InsecureS3Bucket": {
          "Type": "AWS::S3::Bucket",
          "Properties": {
            "VersioningConfiguration": {
              "Status": "Enabled"
            }
          }
        }
      }
    }

Notice what's missing? There's no BucketEncryption property. That omission is exactly what the policy enforcement catches.

For comparison, here's my secure CloudFormation template (template.json) that passes all our security checks:

    {
      "AWSTemplateFormatVersion": "2010-09-09",
      "Description": "A CloudFormation template with a secure S3 bucket that passes OPA checks.",
      "Resources": {
        "SecureS3Bucket": {
          "Type": "AWS::S3::Bucket",
          "Properties": {
            "BucketEncryption": {
              "ServerSideEncryptionConfiguration": [
                {
                  "ServerSideEncryptionByDefault": {
                    "SSEAlgorithm": "aws:kms"
                  }
                }
              ]
            },
            "VersioningConfiguration": {
              "Status": "Enabled"
            },
            "PublicAccessBlockConfiguration": {
              "BlockPublicAcls": true,
              "BlockPublicPolicy": true,
              "IgnorePublicAcls": true,
              "RestrictPublicBuckets": true
            }
          }
        }
      }
    }

The secure template includes:

- BucketEncryption with KMS encryption

- PublicAccessBlockConfiguration to prevent public access

- Proper versioning configuration
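To make the difference between the two templates concrete, here is a minimal Python sketch (Python because that is what Part 1 used) that compares the property names on the two bucket resources. The dictionaries are trimmed copies of the templates shown earlier, and the helper name `bucket_property_names` is hypothetical, chosen only for this illustration.

```python
# Trimmed copies of the two templates (only the parts needed for the comparison).
insecure_template = {
    "Resources": {
        "InsecureS3Bucket": {
            "Type": "AWS::S3::Bucket",
            "Properties": {"VersioningConfiguration": {"Status": "Enabled"}},
        }
    }
}

secure_template = {
    "Resources": {
        "SecureS3Bucket": {
            "Type": "AWS::S3::Bucket",
            "Properties": {
                "BucketEncryption": {
                    "ServerSideEncryptionConfiguration": [
                        {"ServerSideEncryptionByDefault": {"SSEAlgorithm": "aws:kms"}}
                    ]
                },
                "VersioningConfiguration": {"Status": "Enabled"},
                "PublicAccessBlockConfiguration": {
                    "BlockPublicAcls": True,
                    "BlockPublicPolicy": True,
                    "IgnorePublicAcls": True,
                    "RestrictPublicBuckets": True,
                },
            },
        }
    }
}


def bucket_property_names(template):
    """Collect the property names used by every S3 bucket in a template."""
    names = set()
    for resource in template.get("Resources", {}).values():
        if resource.get("Type") == "AWS::S3::Bucket":
            names.update(resource.get("Properties", {}))
    return names


# Properties the secure bucket sets that the insecure one does not.
missing = sorted(
    bucket_property_names(secure_template) - bucket_property_names(insecure_template)
)
print(missing)  # ['BucketEncryption', 'PublicAccessBlockConfiguration']
```

Both templates enable versioning, so only the encryption and public-access settings show up in the difference.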

Next, here is my final Rego policy (cloudformation.rego) that catches these issues. Rego is OPA's declarative policy language, which can feel strange at first but is incredibly powerful for writing complex rules. After my learning journey, I ended up with a clear, reusable set of rules and helper functions.

    package main

    # Deny if S3 bucket does not have KMS encryption enabled
    deny[msg] {
        # Find all S3 bucket resources in the template
        resource := input.Resources[logical_id]
        resource.Type == "AWS::S3::Bucket"

        # Check if the required encryption property is missing
        not has_kms_encryption(resource)

        # Generate a clear violation message for the developer
        msg := sprintf("S3 bucket '%s' must use KMS for server-side encryption.", [logical_id])
    }

    # Deny if a security group allows unrestricted SSH access
    deny[msg] {
        # Find all security group ingress rules
        resource := input.Resources[logical_id]
        resource.Type == "AWS::EC2::SecurityGroupIngress"
        properties := resource.Properties

        # Check if the rule allows SSH traffic (port 22)
        properties.IpProtocol == "tcp"
        properties.FromPort <= 22
        properties.ToPort >= 22

        # Check if the rule allows traffic from anywhere on the internet
        properties.CidrIp == "0.0.0.0/0"

        # Generate a clear violation message for the developer
        msg := sprintf("Security group ingress '%s' allows unrestricted SSH access.", [logical_id])
    }

    # --- Helper Functions ---

    # This helper function checks if a bucket has KMS encryption configured
    has_kms_encryption(resource) {
        resource.Properties.BucketEncryption
        resource.Properties.BucketEncryption.ServerSideEncryptionConfiguration[_].ServerSideEncryptionByDefault.SSEAlgorithm == "aws:kms"
    }
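If you are coming from the Python tool in Part 1, it can help to read the two deny rules as ordinary functions. The sketch below is an illustrative translation, not part of the pipeline: the function names `allows_open_ssh` and `deny`, and the `example` template, are my own stand-ins. It also assumes ports are given as numbers rather than strings.

```python
def has_kms_encryption(resource):
    """Mirror of the Rego helper: True if any default-encryption rule uses aws:kms."""
    encryption = resource.get("Properties", {}).get("BucketEncryption", {})
    rules = encryption.get("ServerSideEncryptionConfiguration", [])
    return any(
        rule.get("ServerSideEncryptionByDefault", {}).get("SSEAlgorithm") == "aws:kms"
        for rule in rules
    )


def allows_open_ssh(properties):
    """Mirror of the second rule: TCP, port range covering 22, open to 0.0.0.0/0."""
    from_port = properties.get("FromPort")
    to_port = properties.get("ToPort")
    if from_port is None or to_port is None:
        return False  # In Rego, a missing field makes the rule body fail as well.
    return (
        properties.get("IpProtocol") == "tcp"
        and from_port <= 22 <= to_port
        and properties.get("CidrIp") == "0.0.0.0/0"
    )


def deny(template):
    """Return the set of violation messages, like the `deny` set in the policy."""
    messages = set()
    for logical_id, resource in template.get("Resources", {}).items():
        resource_type = resource.get("Type")
        properties = resource.get("Properties", {})
        if resource_type == "AWS::S3::Bucket" and not has_kms_encryption(resource):
            messages.add(
                f"S3 bucket '{logical_id}' must use KMS for server-side encryption."
            )
        if resource_type == "AWS::EC2::SecurityGroupIngress" and allows_open_ssh(properties):
            messages.add(
                f"Security group ingress '{logical_id}' allows unrestricted SSH access."
            )
    return messages


# Hypothetical template with one violation of each rule.
example = {
    "Resources": {
        "Logs": {"Type": "AWS::S3::Bucket", "Properties": {}},
        "WideOpen": {
            "Type": "AWS::EC2::SecurityGroupIngress",
            "Properties": {
                "IpProtocol": "tcp",
                "FromPort": 0,
                "ToPort": 1024,
                "CidrIp": "0.0.0.0/0",
            },
        },
    }
}

for message in sorted(deny(example)):
    print(message)
```

The `WideOpen` rule never mentions port 22 explicitly, yet it is flagged because its range 0 to 1024 contains 22. That is why the policy compares `FromPort` and `ToPort` as a range instead of testing for equality.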

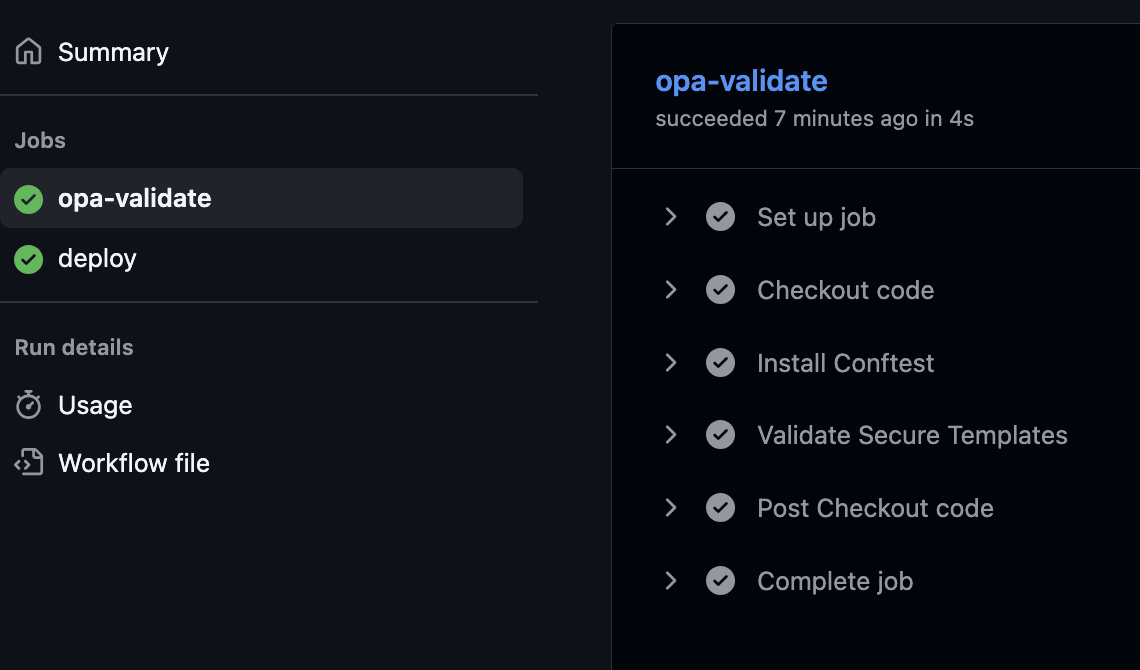

Finally, my production deployment workflow (.github/workflows/validate.yml). This workflow validates secure templates and deploys them to AWS when merged to main.

    name: Deploy Secure Infrastructure

    on:
      pull_request:
        paths: ['templates/secure/**', '.github/workflows/validate.yml', 'policies/**']
      push:
        branches: [main]

    jobs:
      opa-validate:
        runs-on: ubuntu-latest
        steps:
          - uses: actions/checkout@v3
          - name: Install Conftest
            run: |
              wget https://github.com/open-policy-agent/conftest/releases/download/v0.39.0/conftest_0.39.0_Linux_x86_64.tar.gz
              tar xzf conftest_0.39.0_Linux_x86_64.tar.gz
              sudo mv conftest /usr/local/bin
          - name: Validate Secure Templates
            run: conftest test templates/secure/*.json --policy policies/cloudformation.rego -o table

      deploy:
        needs: opa-validate
        runs-on: ubuntu-latest
        # Only deploy from main; pull request runs stop after validation
        if: github.ref == 'refs/heads/main'
        steps:
          - uses: actions/checkout@v3
          - uses: aws-actions/configure-aws-credentials@v4
            with:
              # Read credentials from repository secrets; never hardcode them
              aws-access-key-id: ${{ secrets.MY_AWS_ACCESS_KEY_ID }}
              aws-secret-access-key: ${{ secrets.MY_AWS_SECRET_ACCESS_KEY }}
              aws-region: us-east-1
          - name: Deploy to AWS
            run: aws cloudformation deploy --template-file templates/secure/template.json --stack-name my-secure-stack

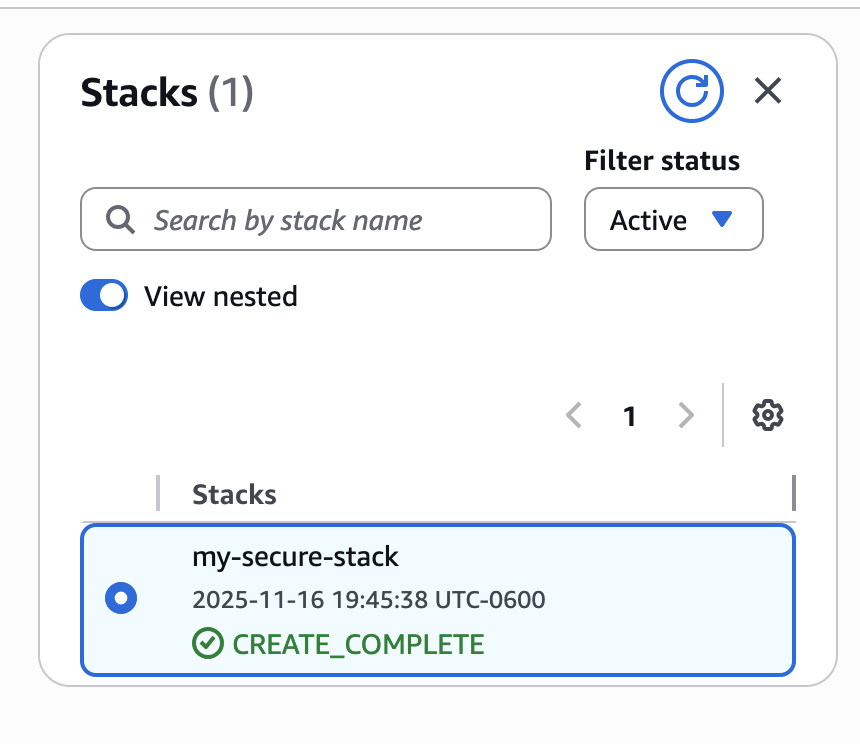

Here's the CloudFormation stack that was created:

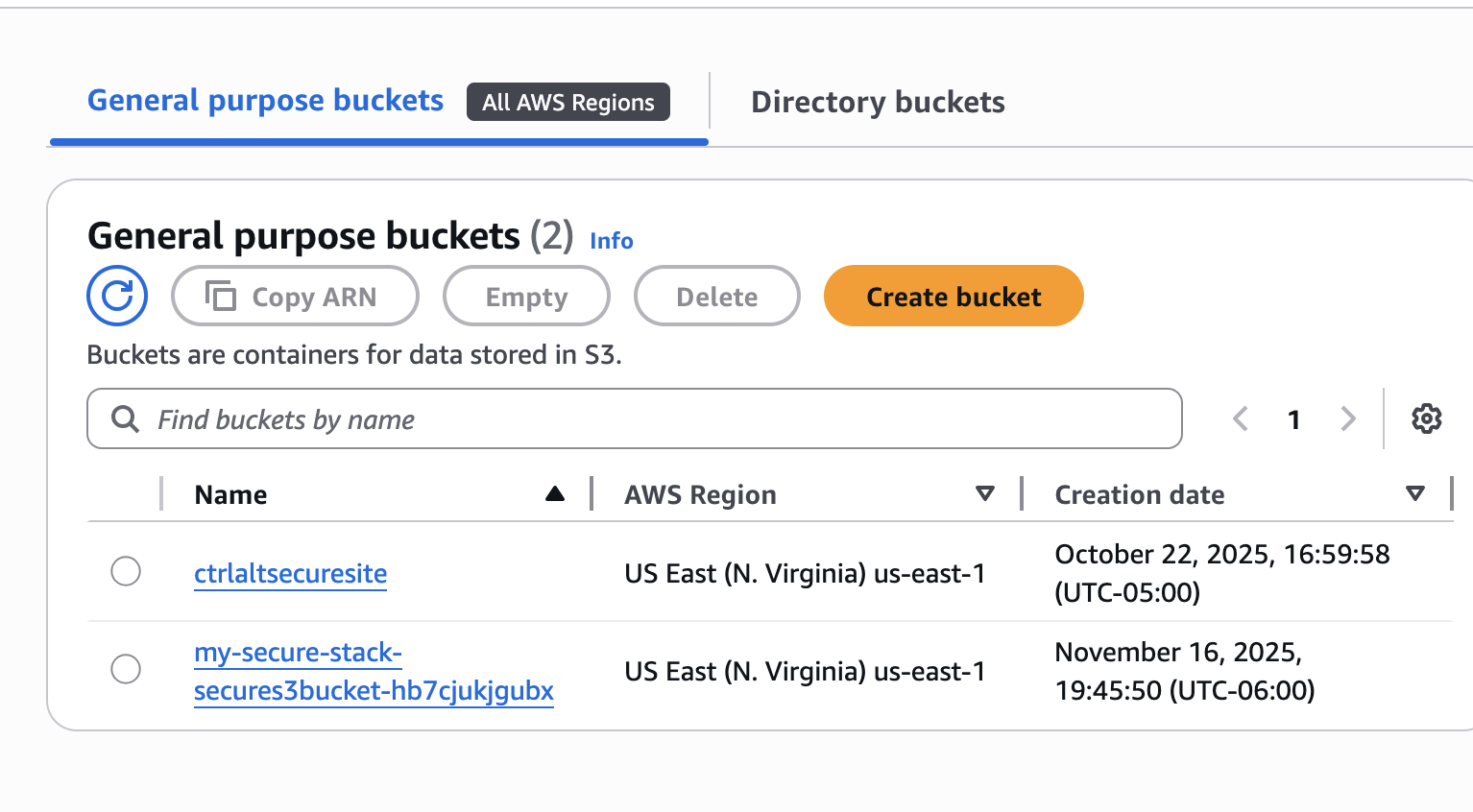

And here's the secure S3 bucket with KMS encryption enabled:

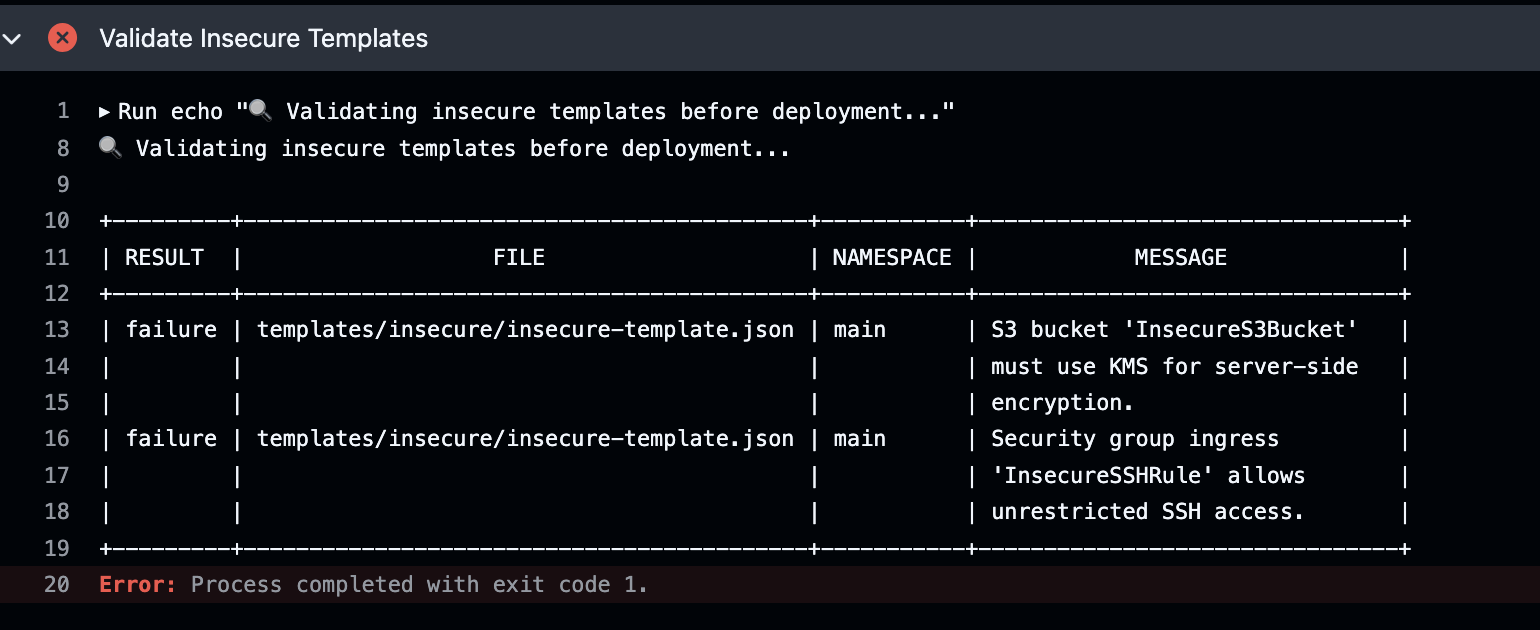

When conftest detects policy violations, it automatically exits with code 1. GitHub Actions sees this non-zero exit code and fails the workflow, blocking the merge.
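The exit-code contract is simple enough to model in a few lines of Python. This is a minimal sketch of the idea, not GitHub Actions' actual implementation: `step_passes` is a hypothetical helper, and the two commands are stand-ins that exit with 0 and 1 so the example runs without conftest installed.

```python
import subprocess
import sys


def step_passes(command):
    """Run a command and report whether it exited with code 0."""
    completed = subprocess.run(command)
    return completed.returncode == 0


# Stand-ins for a policy check that passes and one that finds a violation.
passing_check = [sys.executable, "-c", "raise SystemExit(0)"]
failing_check = [sys.executable, "-c", "raise SystemExit(1)"]

print(step_passes(passing_check))  # True
print(step_passes(failing_check))  # False
```

Swapping a stand-in for the real `conftest test ...` command line would apply the same pass/fail decision to the actual policy check.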

Demonstrating the Guard: Blocking Insecure Deployments

To showcase how the policy enforcement actually blocks bad infrastructure, I created a separate demo workflow (.github/workflows/test-insecure.yml) that attempts to deploy the insecure template:

    name: Demo - Attempt Insecure Deployment

    on:
      workflow_dispatch:
      pull_request:
        paths: ['templates/insecure/**', '.github/workflows/test-insecure.yml']

    jobs:
      attempt-insecure-deploy:
        runs-on: ubuntu-latest
        steps:
          - uses: actions/checkout@v3
          - name: Install Conftest
            run: |
              wget https://github.com/open-policy-agent/conftest/releases/download/v0.39.0/conftest_0.39.0_Linux_x86_64.tar.gz
              tar xzf conftest_0.39.0_Linux_x86_64.tar.gz
              sudo mv conftest /usr/local/bin
          # This step fails on the insecure template, so the steps below never run
          - name: Validate Insecure Templates
            run: conftest test templates/insecure/*.json --policy policies/cloudformation.rego -o table
          - uses: aws-actions/configure-aws-credentials@v4
            with:
              # Read credentials from repository secrets; never hardcode them
              aws-access-key-id: ${{ secrets.MY_AWS_ACCESS_KEY_ID }}
              aws-secret-access-key: ${{ secrets.MY_AWS_SECRET_ACCESS_KEY }}
              aws-region: us-east-1
          - name: Deploy Insecure Template
            run: aws cloudformation deploy --template-file templates/insecure/insecure-template.json --stack-name test-insecure-stack

This workflow demonstrates the security gate in action. When it runs, conftest detects the missing KMS encryption and exits with an error code, which stops the workflow before the credentials and deployment steps ever execute. A template that violates the policy never reaches AWS through this pipeline: enforcement is automatic, non-negotiable, and happens before any deployment command runs.

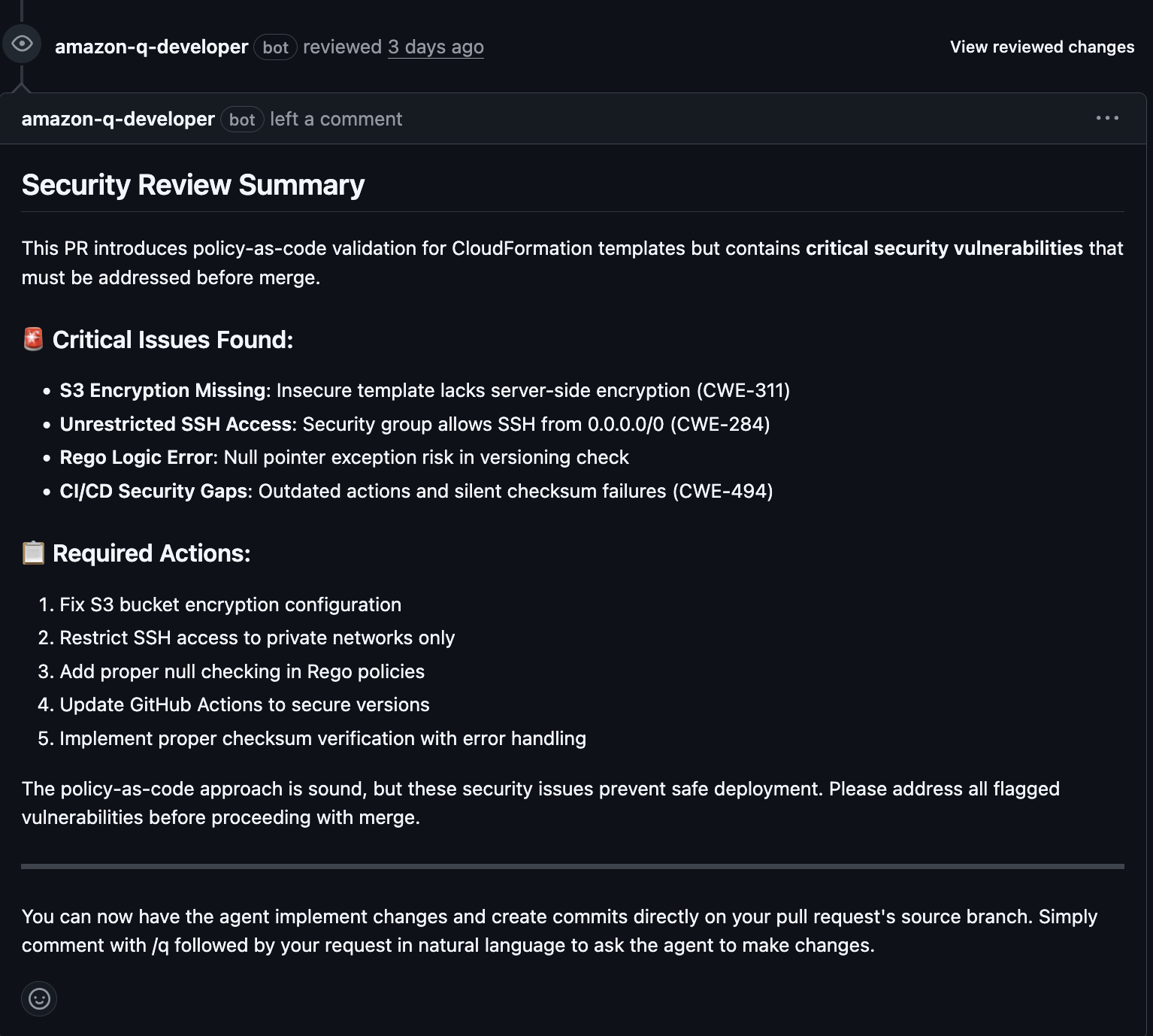
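The reason the deploy step never runs is that steps in a job execute in order, and by default a failed step causes the remaining ones to be skipped. Here is a small Python model of that behaviour. The `run_steps` function and the lambdas are hypothetical stand-ins for the workflow steps, each returning an exit code; none of this is real GitHub Actions code.

```python
def run_steps(steps):
    """Run steps in order and stop at the first non-zero exit code."""
    executed = []
    for name, step in steps:
        executed.append(name)
        exit_code = step()
        if exit_code != 0:
            return executed, exit_code
    return executed, 0


# Stand-ins for the demo workflow's steps.
demo_steps = [
    ("Install Conftest", lambda: 0),
    ("Validate Insecure Templates", lambda: 1),  # policy violation found
    ("Configure AWS credentials", lambda: 0),
    ("Deploy Insecure Template", lambda: 0),
]

executed, exit_code = run_steps(demo_steps)
print(executed)   # ['Install Conftest', 'Validate Insecure Templates']
print(exit_code)  # 1
```

Because validation sits before the credentials step, a failing template stops the job before AWS credentials are even configured.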

Adding AI-Powered Remediation

I installed the Amazon Q Developer app and taught it what I care about using plain-English rule files in a /.amazonq/rules directory:

Rule 1: Enforce Server-Side Encryption (s3-encryption.md)

## Rule: Enforce Server-Side Encryption

All `AWS::S3::Bucket` resources defined in CloudFormation templates must have server-side encryption enabled.

The `BucketEncryption` property must be present within the resource's `Properties`.

Rule 2: Restrict SSH Access (security-group-ingress.md)

## Rule: Restrict SSH Access

All `AWS::EC2::SecurityGroupIngress` resources that allow SSH (port 22) traffic must not allow unrestricted access from the internet (`0.0.0.0/0`).

The `CidrIp` property must be set to a specific, trusted IP range.

The Amazon Q app reads these instructions and posts detailed remediation advice directly in pull requests.
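The generated advice itself isn't reproduced here. As a rough illustration of the kind of change Rule 1 calls for, the Python sketch below adds a KMS `BucketEncryption` block to any S3 bucket that lacks one. The helper `add_kms_encryption` is hypothetical and is not something Amazon Q produces or runs; it only shows the shape of the fix.

```python
import copy


def add_kms_encryption(template):
    """Return a copy of the template where every S3 bucket has a BucketEncryption block."""
    fixed = copy.deepcopy(template)  # leave the caller's template untouched
    for resource in fixed.get("Resources", {}).values():
        if resource.get("Type") != "AWS::S3::Bucket":
            continue
        properties = resource.setdefault("Properties", {})
        # setdefault keeps an existing BucketEncryption block as it is.
        properties.setdefault(
            "BucketEncryption",
            {
                "ServerSideEncryptionConfiguration": [
                    {"ServerSideEncryptionByDefault": {"SSEAlgorithm": "aws:kms"}}
                ]
            },
        )
    return fixed


# Trimmed copy of the insecure template from earlier in the post.
insecure = {
    "Resources": {
        "InsecureS3Bucket": {
            "Type": "AWS::S3::Bucket",
            "Properties": {"VersioningConfiguration": {"Status": "Enabled"}},
        }
    }
}

remediated = add_kms_encryption(insecure)
print(sorted(remediated["Resources"]["InsecureS3Bucket"]["Properties"]))
```

In practice the developer makes this edit by hand in the template and pushes a new commit, which re-triggers the policy check.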

The Bumps Along the Road: Learning Rego

Getting this working wasn't all smooth sailing. The biggest challenge by far was getting up to speed with Rego. It's an incredibly powerful policy language, but its syntax can feel alien at first.

What made this especially challenging was the state of OPA's documentation. Many of their official resources were broken or out of date:

- Tutorial links returned 404 errors

- Lab environments were completely non-functional

This lack of current learning materials meant I had to find alternative approaches. I spent hours:

- Reading through sparse OPA documentation

- Experimenting with trial-and-error on Rego syntax

- Feeding my half-working policies to AI assistants asking "Why isn't this working?" or "How do I check for this specific property?"

That conversational feedback loop with AI was crucial for learning and getting my policies right when official resources failed me.

This project was a fantastic lesson in combining the strengths of declarative policy engines with modern AI assistants. I ended up with a security workflow that is not only effective but also developer-friendly.

What I Learned

Building this hybrid security workflow taught me several valuable lessons:

OPA/Rego vs. Python Approaches: After building the Python version in Part 1, Rego was a whole different beast. Python feels natural and step-by-step, but Rego makes you think in terms of rules and relationships. I'm still getting the hang of it - there's definitely more to learn before I'd call myself proficient.

CI/CD Integration is Critical: Having policies in code is great, but they're useless if they don't run automatically. Integrating OPA into GitHub Actions created a true security gate that runs on every PR. This "security as code" approach ensures policies are consistently enforced without relying on manual reviews.

AI Tools Augment, Don't Replace: Amazon Q Developer doesn't replace the need for strong policies; it makes them more actionable. The combination works well: OPA provides the strict enforcement (the "Guard"), while Amazon Q provides the helpful guidance (the "Guide"). This hybrid approach gives you both security and a good developer experience.

I still have a lot to learn. I struggled a bit due to being unfamiliar with git and CI/CD.

Resources

- GitHub Actions for Beginners - A great video that helped me get started with GitHub Actions.

- GitHub Actions Full Course for Beginners - A comprehensive course that provided a deeper understanding of GitHub Actions workflows.